In this 3rd & final post on hexagonal architecture I’m going to try & wrap up my opinion on how we as Testers can be confident that a reduction in integration tests will not impact the quality of the code.

The above image shows the board from the game show Blockbusters which was big in the UK in the late 80s / early 90s (& the US prior to that apparently). It is on this post because it demonstrates tessellation &, more importantly, the idea that in a software development team there are several hexagons which tessellate in order to deliver software.

The credit for me talking about tessellating hexagons goes to Matt Ledger. I’m not sure how the metaphor is going to pan out, but here goes…

A new level of hexagon

So we’ve been talking about hexagons in terms of code. I want to abstract out a bit & think of hexagons in terms of work that gets done in a software development project:

- project management

- business analysis

- writing production code

- writing test code

These pieces of work are done in their own hexagon:

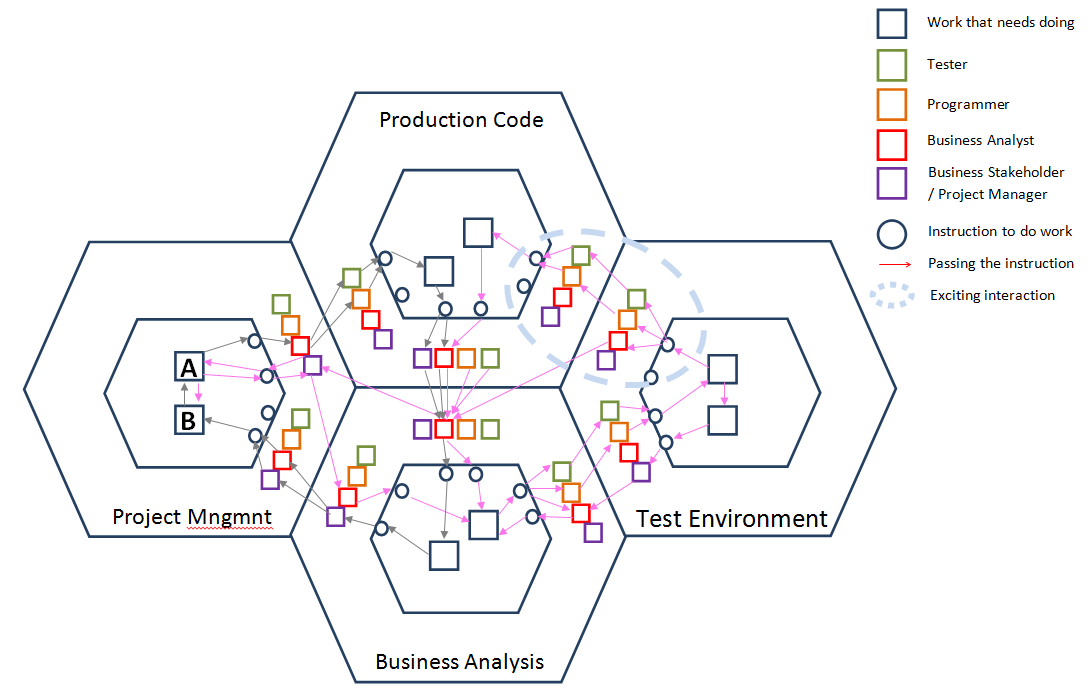

This diagram is meant to be confusing. It represents flow of conversations & instructions (messages) throughout each of the hexagons associated with a project following an agile development cycle.

For an example, let's follow the journey of the grey arrows.

There is a ‘need’ (A) from the customer in the Project Management hexagon which is quite woolly & vague. A 3×5 card outlining the need (interface) is added to a queue (backlog?) of work somewhere in the office.

A BA decides to pick up the card & see what they can do to progress it. The BA is an example of an adapter. Note that any team member (adapter) could have picked up that card.

The BA has a chat with a Tester & Programmer about the card & they decide to work on it together. Now the card is being worked in the Production hexagon. This is an example of a time-boxed spike, or proof of concept. No business analysis has been done on the card yet.

Once some work has been completed on the card, the BA & Business Stakeholder &/or PM have a look at it & decide that yes, there is value in proceeding with fulfilling this ‘need’, so some business analysis work is required in the Business Analysis hexagon.

Some or all of the business analysis is carried out & that is passed back to the Project Management hexagon for some project related decisions (B) to be made.

These decisions are then fed back into the original piece of work (A). This is where the grey journey ends (in my super-contrived example) & the pink journey commences.

I’ll let you follow the pink journey yourself, but have a look at the “exciting interaction” - a BA, Tester & Programmer talk about the tests & the Tester & Programmer take on the task of writing the production code together. Note - it could just as easily be a Programmer & BA or PM writing the code if lots of questions still need answering.

You get the idea - a lot of interactions happen in real-time, face-to-face between the hexagons. Who picks up the work isn’t as important as getting the work done.

So I guess I should start wrapping this post, & the series, up by answering the question:

As a Tester, how confident am I that the removal of (automated) integration tests has not decreased the stability of the code?

Having spoken to lots of people while writing these posts, I would have to say that the confidence comes from speaking to the people writing the code, building relationships & trying to understand what they do & how they do it. (Unfortunately for me, I knew this already, but I now know & understand hexagonal architecture a lot better!)

Understanding the architecture of the software & how the code is implemented really helps us as Testers think about how we can test the software (from a white & black box perspective) & what other areas of the code may have been impacted by a change made by a Programmer.

We Testers know how to test, so we can take what we know about testing, add it to what we now know about the architecture & feed back our ideas on how to test the feature we are discussing. When I pair with the Programmers to actually write code, we discuss what automated tests should be written & at what level they should be executed. Having a say in what automated tests are written (& how they are executed) really helps me have confidence in what the Programmers are delivering.

Programmers frequently demo their code to us & then let us play with it on their machines - for example, we prove if the tests are actually checking the code or just the data being used. (I also introduced the idea of Mutation testing to the team as a means of validating the test code. I needed Matt to implement it for me though!)
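To make the mutation testing idea a bit more concrete, here is a minimal hand-rolled sketch in Python. Everything in it (`is_adult`, the mutant, the two tests) is made up for illustration; it is not the code or tooling the team used, & real mutation testing tools generate & run the mutants automatically. The point is only to show the difference between a test that passes because of the data it happens to use & a test that actually checks the code.

```python
def is_adult(age: int) -> bool:
    """Hypothetical production code under test."""
    return age >= 18


def is_adult_mutant(age: int) -> bool:
    """The same code with one small change (>= became >), i.e. a 'mutant'."""
    return age > 18


def weak_test(fn) -> bool:
    # Only uses data far away from the boundary, so it cannot tell
    # the original and the mutant apart.
    return fn(30) is True and fn(5) is False


def strong_test(fn) -> bool:
    # Adds the boundary value, which is exactly where the mutant differs.
    return weak_test(fn) and fn(18) is True


def mutant_survives(test) -> bool:
    """Return True if the test still passes when run against the mutant."""
    assert test(is_adult), "the test must pass against the original code first"
    return test(is_adult_mutant)


if __name__ == "__main__":
    print("weak test lets the mutant survive:", mutant_survives(weak_test))
    print("strong test lets the mutant survive:", mutant_survives(strong_test))
```

A surviving mutant is a prompt for a conversation rather than proof of a bug: it suggests the test would still pass if the code were subtly wrong, which is worth raising with the Programmer who wrote it.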

With confidence comes the issue of trust - a team must be built on trust if the members of that team are to communicate openly about their work. If a team member feels that they are going to be judged or derided for a piece of work they have completed, they are not going to be as willing to talk to other team members about that work. This will not help build confidence between the team members.

Similarly, if a team member feels that they shouldn’t have to show or demonstrate their work to another team member, this will also result in mistrust between team members.

As Testers, we really need to move away from the idea that we follow behind the window cleaner telling him “you’ve missed a bit”. That behaviour really does not add that much value for anyone.

Key takeaways for me from this series are:

- Communication & openness build confidence in a team

- Trust is required in order for communication & openness to flourish

- Be positive & constructive when working with other team members

- Working together to agree automated tests & having access to those tests once written really helps me as a tester have confidence in the code being delivered & supports my manual testing

- Helping to drive out requirements & write the automated tests also helps to mitigate any gaping holes in the automated test suite

- If I see a mock, I should ask to see the contract test for that mock - this proves the mock is behaving the same as the object/interface it is pretending to be

- I need to read “Growing Object-Oriented Software, Guided by Tests” (GOOS)
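For the takeaway above about asking to see the contract test behind a mock, here is a rough sketch of what such a test could look like in Python. All of the names (`CustomerStore`, `FakeCustomerStore`, `JsonFileCustomerStore`) are hypothetical & only there for illustration; a real adapter would more likely talk to a database or another service. The idea is that one set of checks is run against both the fake & the real implementation, so if they ever drift apart, the contract test fails.

```python
import json
import os
import tempfile
from typing import Dict, Optional, Protocol


class CustomerStore(Protocol):
    """Hypothetical port: what the application expects from any store."""

    def save(self, customer_id: str, name: str) -> None: ...

    def find(self, customer_id: str) -> Optional[str]: ...


class FakeCustomerStore:
    """In-memory stand-in used by fast unit tests instead of the real adapter."""

    def __init__(self) -> None:
        self._rows: Dict[str, str] = {}

    def save(self, customer_id: str, name: str) -> None:
        self._rows[customer_id] = name

    def find(self, customer_id: str) -> Optional[str]:
        return self._rows.get(customer_id)


class JsonFileCustomerStore:
    """Assumed 'real' adapter for this sketch: persists customers to a JSON file."""

    def __init__(self, path: str) -> None:
        self._path = path

    def _load(self) -> Dict[str, str]:
        if not os.path.exists(self._path):
            return {}
        with open(self._path, encoding="utf-8") as handle:
            return json.load(handle)

    def save(self, customer_id: str, name: str) -> None:
        rows = self._load()
        rows[customer_id] = name
        with open(self._path, "w", encoding="utf-8") as handle:
            json.dump(rows, handle)

    def find(self, customer_id: str) -> Optional[str]:
        return self._load().get(customer_id)


def check_customer_store_contract(store: CustomerStore) -> None:
    """The contract: every implementation must pass exactly these checks."""
    assert store.find("42") is None        # unknown ids return nothing
    store.save("42", "Ada")
    assert store.find("42") == "Ada"       # saved values can be read back
    store.save("42", "Grace")
    assert store.find("42") == "Grace"     # saving again overwrites


def run_contract_checks() -> bool:
    """Run the same contract against the fake and the file-backed adapter."""
    check_customer_store_contract(FakeCustomerStore())
    with tempfile.TemporaryDirectory() as tmp:
        path = os.path.join(tmp, "customers.json")
        check_customer_store_contract(JsonFileCustomerStore(path))
    return True


if __name__ == "__main__":
    print("contract holds for both implementations:", run_contract_checks())
```

As a Tester, the useful question to ask is whether the contract covers the behaviour the other tests rely on the fake for. If a unit test depends on something the contract never checks, the fake is no longer proving anything about the real thing.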

That’s it for hexagonal architecture from me for now. It’s been an interesting journey. Thanks for coming with me, & apologies if it didn’t go where you expected, but hopefully it’s still added value!