This post is WIP & under iterative development!

This is part 2 of my mini series of posts on the hexagonal architecture pattern, its testing strategy & the impact on Testers.

The first post was an attempt to explain hexagonal architecture in a language I understand.

This post is focussed on the testing strategy associated with hexagonal architecture.

I’ve tried to gather some information around test automation to support this post. That information largely reproduces the work of Alister Scott & Lisa Crispin, but I hope that writing about it myself will help me to learn the topic, & it means the relevant reference information for this post now lives in just one (other) location.

Why hexagonal architecture? Reduced coupling of course!

The use of hexagonal architecture reduces coupling with internal & external integration points (see Kevin’s comment in Part 1), & it reduces leaks of implementation knowledge.

So, the reduced dependency on integration points means that we do not need to have as many integration tests. This initially made me (as a Tester) slightly uncomfortable as I felt the value of the automated regression test suite would be somewhat reduced. Aside from the technical implications, will no one think about the automation pyramid?!

Rather than having acceptance/integration tests which check that a request progresses all the way through the system - from the presentation layer, through to the persistence layer - & back again, or subsections of that path, the journey is split into many short hops, or unit tests.

The problem with long integration tests is that they tend to result in dependencies between domain objects & implementation, which in turn causes them to be brittle & tricky to maintain.

Before we begin: there isn’t a specific testing strategy for hexagonal architecture. It’s more that hexagonal architecture enables a certain testing strategy to be executed. This article is largely focused on “Mocks” & “Self Shunting”.

Acceptance & integration testing in hexagonal architecture

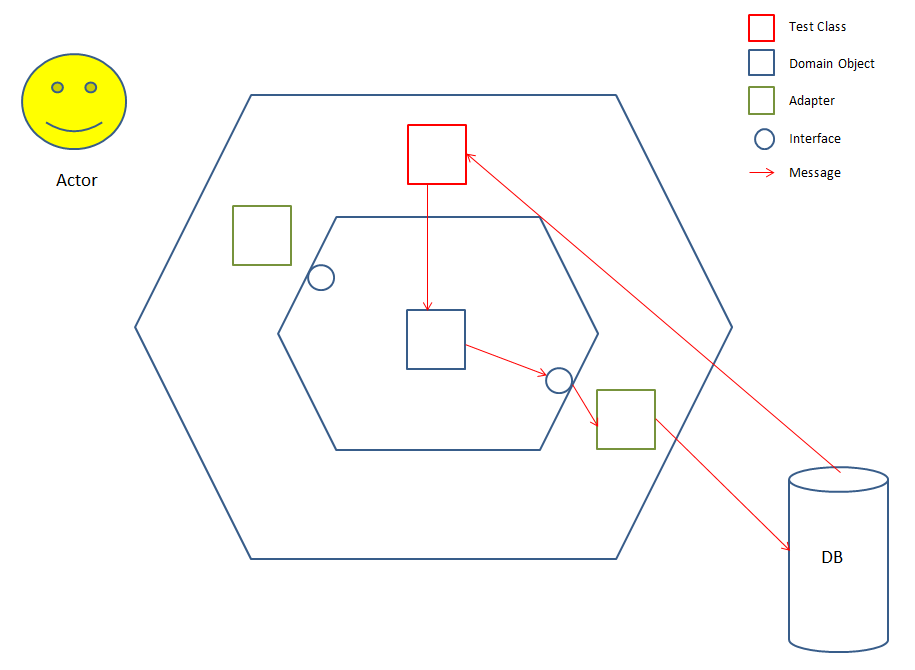

To set the scene, we consider the tests as clients of the business logic & as such they live in the outer hexagon.

In this simple (& contrived) example of an integration test, we see a message being sent to the DB & the test asserting on that message being in the DB.

From this example, we can see that the test message reaching the DB is dependent on several interactions, both in the inner & outer hexagons, as well as on an external service.

If the assertion in the test fails due to the message not being in the DB, there are several places where the bug may be nesting. The test is also highly likely to be aware of the implementation - if we change the DB, we are going to have to change all the tests using that DB.
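As a rough sketch of the kind of integration test described above (this is not our actual code: `MessageService`, `SqliteMessageRepository` & the `messages` table are names made up for illustration, & an in-memory SQLite database stands in for the real DB):

```python
import sqlite3


class SqliteMessageRepository:
    """Hypothetical adapter in the outer hexagon that persists messages."""

    def __init__(self, connection):
        self.connection = connection
        self.connection.execute("CREATE TABLE IF NOT EXISTS messages (body TEXT)")

    def save(self, body):
        self.connection.execute("INSERT INTO messages (body) VALUES (?)", (body,))
        self.connection.commit()


class MessageService:
    """Hypothetical domain object in the inner hexagon."""

    def __init__(self, repository):
        self.repository = repository

    def send(self, body):
        # A small piece of business logic: trim whitespace before saving.
        self.repository.save(body.strip())


def test_message_reaches_the_db():
    connection = sqlite3.connect(":memory:")
    service = MessageService(SqliteMessageRepository(connection))

    service.send("  hello  ")

    # The test queries the table directly, so it knows the table & column
    # names. Change the schema (or the DB) & this test has to change too.
    rows = connection.execute("SELECT body FROM messages").fetchall()
    assert rows == [("hello",)]
```

If that final assertion fails, the bug could be in the service, the repository, the SQL or the database setup. The test on its own does not tell you which.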

For an example of an acceptance test, imagine the Actor in the above diagram is a browser automation tool (such as Selenium) instead of a human - the message would still originate from the test class, but would go via the Actor & into the hexagon from there.

Unit testing in the hexagonal architecture

So ideally, we want fewer integration tests & more unit tests because unit tests:

- test the behaviour of the class / component rather than its implementation

- have no dependencies on other classes / components

- are easier to write & maintain than automated tests at higher levels in the pyramid (due to their independence)

- are quick to run

But as Testers, how do we get the confidence that the reduction in integration tests is not going to be to the detriment of the product/feature being tested? The answer to that question (I feel) is an understanding of & confidence in how we unit test the code. So, for the rest of this post I am going to try & explain 2 key techniques our development team currently use to unit test the code: mocking & self-shunting.

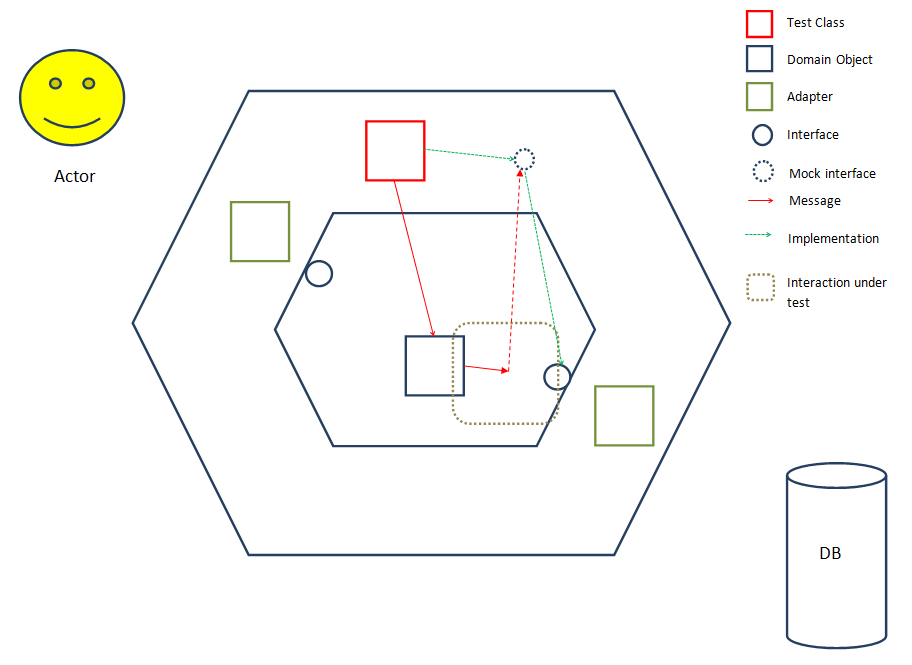

Mocking

Traditionally, mocks are instantiated & called instead of the domain interface in unit tests. Mocks:

- allow a test to assert that a domain object would call a domain interface with a certain message

- implement the same interface as the actual domain object, so they are bound by the same ‘contractual obligations’ (if you regard an interface as a contract)

- are substitutes for domain objects

I oversimplify mocking into the concept of spying - the agent (mock implementing the interface) intercepts messages being sent by a mark (domain object) by pretending to be the recipient of the mark’s message. The government (test) is expecting feedback from the agent.

Back in the software world, when the domain object under test sends a message to what it believes is the interface, it is actually sending it to the mock which is pretending to be the interface.
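Here is a minimal sketch of that idea using Python’s standard `unittest.mock` library. The `MessageRepository` interface & `MessageService` domain object are hypothetical names invented for this example, not code from our system:

```python
from unittest.mock import create_autospec


class MessageRepository:
    """Hypothetical domain interface (a port) that the domain object talks to."""

    def save(self, body):
        raise NotImplementedError


class MessageService:
    """Hypothetical domain object under test."""

    def __init__(self, repository):
        self.repository = repository

    def send(self, body):
        self.repository.save(body.strip())


def test_service_saves_trimmed_message():
    # The agent: a mock that has the same methods & signatures as the interface.
    repository = create_autospec(MessageRepository, instance=True)
    service = MessageService(repository)

    # The mark sends its message to what it believes is the real interface.
    service.send("  hello  ")

    # The government asks the agent what it intercepted.
    repository.save.assert_called_once_with("hello")
```

Because `create_autospec` copies the shape of `MessageRepository`, calling a method that is not on the interface, or calling `save` with the wrong number of arguments, fails the test. That is the ‘contractual obligation’ part.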

So mocking enables the software to be developed & tested without dependencies on other classes / domain objects, which is great, but it has its downsides. One major problem is the (programmatic) expense of mocking: the mocking frameworks involved use Reflection (don’t get too hung up on this).

How do you know that the mock is behaving the same as the production code? There should be a contract test which checks that the mock is doing what it is supposed to be doing. A good rule of thumb (heuristic) is to ask to see the contract test related to a mock whenever someone says they have mocked out an interface / object.
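One possible shape for such a contract test, sketched under my own assumptions: the same set of checks is run against both the stand-in used in unit tests & the real implementation, so the two cannot quietly drift apart. The class names, the `all()` method & the `messages` table are all hypothetical, & a hand-written in-memory stand-in is used here in place of a framework-generated mock to keep the example short.

```python
import sqlite3


class InMemoryMessageRepository:
    """Hypothetical stand-in used by the unit tests."""

    def __init__(self):
        self._bodies = []

    def save(self, body):
        self._bodies.append(body)

    def all(self):
        return list(self._bodies)


class SqliteMessageRepository:
    """Hypothetical production implementation of the same interface."""

    def __init__(self, connection):
        self._connection = connection
        self._connection.execute("CREATE TABLE IF NOT EXISTS messages (body TEXT)")

    def save(self, body):
        self._connection.execute("INSERT INTO messages (body) VALUES (?)", (body,))

    def all(self):
        rows = self._connection.execute("SELECT body FROM messages ORDER BY rowid")
        return [body for (body,) in rows]


def check_repository_contract(repository):
    """The contract: anything claiming to be a repository must pass these checks."""
    assert repository.all() == []
    repository.save("first")
    repository.save("second")
    assert repository.all() == ["first", "second"]


def run_contract_tests():
    # Same checks, two implementations.
    check_repository_contract(InMemoryMessageRepository())
    check_repository_contract(SqliteMessageRepository(sqlite3.connect(":memory:")))
    return True
```

If someone changes the production repository so that it behaves differently from the stand-in, `check_repository_contract` should fail for one of them, which is exactly the signal you want.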

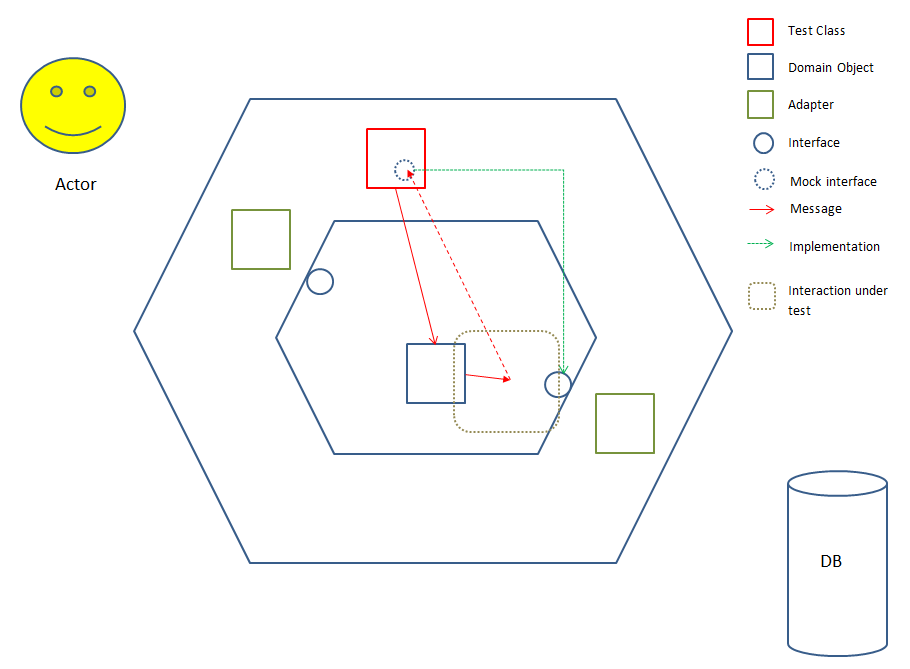

Self Shunting

What if the test could pretend to be (implement) the interface itself, & receive/intercept the messages from the domain object under test? This would reduce the need for an /expensive/ mock. Well, there is a technique & it’s called “Self Shunting”:

The test not only calls the domain object it is testing with the message it is asserting for, but it also pretends to be (implements) the interface which the domain object is expecting. This means that instead of the domain object calling the actual interface implementation, it will be calling the test that implements the interface - the test it was originally called with. The test can then assert on the behaviour of the domain object under test directly, rather than via a mock.

To take my espionage example to the next level, the government (test) becomes the agent itself (implements the interface) & intercepts the messages being sent by the mark (domain object).
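A minimal sketch of a self-shunting test, using Python’s built-in `unittest` module. As before, `MessageRepository` & `MessageService` are hypothetical names for illustration only:

```python
import unittest


class MessageRepository:
    """Hypothetical domain interface (a port)."""

    def save(self, body):
        raise NotImplementedError


class MessageService:
    """Hypothetical domain object under test."""

    def __init__(self, repository):
        self.repository = repository

    def send(self, body):
        self.repository.save(body.strip())


class MessageServiceTest(unittest.TestCase, MessageRepository):
    """The test case implements the interface itself: this is the self shunt."""

    def setUp(self):
        self.saved = []

    def save(self, body):
        # The domain object's call lands here, on the test itself.
        self.saved.append(body)

    def test_send_saves_trimmed_message(self):
        # The test passes itself in as the repository.
        service = MessageService(repository=self)

        service.send("  hello  ")

        self.assertEqual(self.saved, ["hello"])


def run_self_shunt_test():
    """Run the test case programmatically & return the unittest result object."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(MessageServiceTest)
    result = unittest.TestResult()
    suite.run(result)
    return result
```

There is no mocking framework involved at all: the test records what it receives in a plain list & asserts on that list.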

Impact on the test automation pyramid

It would appear that the bottom of the pyramid is going to get wider as more integration tests are converted into unit tests. It could even get to the stage where there are no integration tests, which would leave a gap in the pyramid between unit tests & acceptance tests (if you ignore gravity).

I’m not sure we could ever reach this nirvana of no integration tests. It’s a trade-off between having a quick automation test suite & actually having confidence that the automation test suite is flexing the code enough that we as Testers don’t have to spend days & days doing potentially unnecessary manual testing. By unnecessary, I mean the kind of testing which we can automate - I’m not suggesting we do no manual testing. I’m a big advocate of exploratory testing (largely because I’m not great at automation, but that’s another topic in my syllabus!) & the value it adds to software development.

Likewise with acceptance tests - you definitely need /some/ automated journeys through the system, but you can be clever about how you write these tests so that they are not too brittle & don’t take an age to run.

Coming up…

In the 3rd & final post, I will talk more about how the different disciplines of software development fit into the hexagons to show how Testers in an agile development environment maintain an independent viewpoint whilst being integrated into the development team (Testers are wired differently).

This has been the toughest post I’ve written (hence the reason it’s taken so long!) so I would really appreciate some feedback from others who know far more about mocking, self-shunting & hex architecture than I do, so that I can improve this post.

I’d also be really interested in hearing from anyone currently thinking about test automation & how to implement it in their development lifecycle.

Some useful links:

Mocks Aren’t Stubs (Fowler)

Shunt Pattern (Cockburn)

Self Shunt Pattern (Feathers)

Not a fan of the Self Shunt Pattern